Computer architecture: Difference between revisions

imported>Eric Evers |

imported>Howard C. Berkowitz (A little more clarification about multicore. Don't we have some multiprocessing architecture articles I can't find?) |


Revision as of 11:07, 22 February 2009

Computer architecture is often used to refer to an area of specialization within the academic discipline of computer science. At other times, the phrase may refer to a specific machine specification, such as SPARC, Intel x86, PowerPC, or Motorola 680x0. A more precise term for such a specification is instruction set architecture (ISA). In the 1980s, when computer architecture programs first began within computer science departments at various universities, heavy emphasis was placed on research into designing better ISAs. Nowadays, the industry has converged on a few ISAs and tends to reimplement them repeatedly with faster hardware, so computer architecture has moved to focus more on ways to speed up computer hardware and system-level software than on designing new ISAs. The academic discipline of computer architecture thus overlaps somewhat with fields such as computer engineering, which are usually located within the academic discipline of electrical engineering. The field known as computer architecture may touch all aspects of how specific computers can be specified, built, and verified (tested).

This article will discuss the basics of computer organization, and related articles will expand on each basic part, since the design of computers involves a broad and complex set of technologies.

Basics of computer organization

The basic building blocks of an electronic, general-purpose computer are the processor, the memory, and the input and output devices (collectively termed I/O). Each of these building blocks is connected to a shared "bus", which is a bundle of wires for conducting electrical signals equivalent to binary 0s or 1s. In this way, binary numbers (the "data" to be manipulated by the processor) can be transmitted from memory to the processor, where they are changed, and the result is then moved back into memory. Later, the data can be transmitted from memory over the bus to an output device such as a printer.

Since there is typically just one bus, each part of the computer has to wait its turn, and everything has to happen in the correct order over time. The movement of electrical signals ("information") through the building blocks of the computer is synchronized and coordinated by a special circuit, called the control unit, by means of an electrical signal called a clock, which the control unit sends to all the other parts of the computer. The control unit is usually part of the processor.

The first electronic, general-purpose computers had to be instructed how to manipulate data by physically rewiring the processor of the computer, while the memory of the computer contained only the data to be manipulated. A huge conceptual breakthrough was made during the building of the ENIAC in the 1940s. ENIAC's designers realized that a computer could be designed to store both machine instructions and the data to be manipulated in the computer's memory bank. This concept was adopted in virtually all subsequent computer designs and is still used today. Although he did not invent the concept, mathematician John von Neumann was the first to document it, and so the phrase Von Neumann architecture (or Von Neumann computer) is sometimes used to describe putting the program, as well as the data, in a computer's memory.

A computer's memory can be viewed as a list of cells, each with a unique, numbered "address". Each cell can store a small, fixed amount of information (a binary number). This binary number can be either an instruction, telling the computer what to do, or data that the computer is to process according to the "program" or instructions. In principle, any cell can be used to store either instructions or data; in practice, computers are designed to help programmers make sure that instructions and data, while both stored somewhere in the memory, are not accidentally mixed up.

The arithmetic logic unit (ALU) is in many senses the heart of the computer. It is capable of performing two classes of basic operations. The first is arithmetic operations; for instance, adding two numbers together or subtracting one from another. The set of arithmetic operations may be very limited; indeed, some designs do not directly support multiplication and division (instead, programmers implement multiplication and division through programs that perform multiple additions, subtractions, and other digit manipulations). The second class of ALU operations involves comparison: given two numbers, determining whether they are equal and, if they are not, which is larger.
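The remark about building multiplication out of simpler operations can be illustrated with a minimal Python sketch. It assumes an ALU that offers only addition, one-digit binary shifts, and comparisons; the function names `multiply` and `compare` are hypothetical and chosen for illustration, not taken from any particular machine.

```python
def multiply(a: int, b: int) -> int:
    """Multiply two non-negative integers using only add, shift and compare.

    This is the binary form of long multiplication: for every 1 digit
    in b, add a correspondingly shifted copy of a to the running total.
    """
    if a < 0 or b < 0:
        raise ValueError("this sketch handles non-negative integers only")
    product = 0
    while b != 0:
        if b & 1:               # lowest binary digit of b is 1
            product = product + a
        a = a << 1              # double a (shift left by one binary digit)
        b = b >> 1              # drop the lowest binary digit of b
    return product


def compare(a: int, b: int) -> str:
    """The second class of ALU operation: decide equality or which is larger."""
    if a == b:
        return "equal"
    return "first is larger" if a > b else "second is larger"


print(multiply(6, 7))   # 42
print(compare(3, 9))    # second is larger
```

A loop that simply adds `a` to itself `b` times would also work; the shift-based version needs only as many steps as `b` has binary digits.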

The I/O systems are the means by which the computer receives information from the outside world, and reports its results back to that world. On a typical personal computer, input devices include objects like the keyboard and mouse, and output devices include computer monitors, printers and the like, but as will be discussed later a huge variety of devices can be connected to a computer and serve as I/O devices.

The control system ties this all together. Its job is to read instructions and data from memory or the I/O devices, decode the instructions, provide the ALU with the correct inputs according to the instructions, "tell" the ALU what operation to perform on those inputs, and send the results back to the memory or to the I/O devices. One key component of the control system is a counter that keeps track of the address of the current instruction; typically, this is incremented each time an instruction is executed, unless the instruction itself indicates that the next instruction should be at some other location (allowing the computer to repeatedly execute the same instructions).

Since the 1980s the ALU and control unit (collectively called a central processing unit or CPU) have typically been located on a single integrated circuit called a microprocessor.

The functioning of such a computer is in principle quite straightforward. Typically, on each clock cycle, the computer fetches instructions and data from its memory. The instructions are executed, the results are stored, and the next instruction is fetched. This procedure repeats until a halt instruction is encountered.

The instructions interpreted by the control unit and executed by the ALU are limited in number, precisely defined, and very simple. Broadly, they fit into one or more of four categories: 1) moving data from one location to another (an example might be an instruction that "tells" the CPU to "copy the contents of memory cell 5 and place the copy in cell 10"); 2) executing arithmetic and logical processes on data (for instance, "add the contents of cell 7 to the contents of cell 13 and place the result in cell 20"); 3) testing the condition of data ("if the contents of cell 999 are 0, the next instruction is at cell 30"); 4) altering the sequence of operations (the previous example alters the sequence of operations, but instructions such as "the next instruction is at cell 100" are also standard).
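The four categories of instruction, together with the instruction counter and the fetch-and-execute cycle described earlier, can be sketched as a toy stored-program machine in Python. Everything here is an assumption made for illustration: the instruction names (`COPY`, `ADD`, `JUMP_IF_ZERO`, `JUMP`, `HALT`), the use of tuples rather than binary codes, and the memory layout do not correspond to any real instruction set.

```python
def run(memory, max_steps=10_000):
    """Fetch, decode and execute instructions until HALT is reached."""
    pc = 0                                  # address of the next instruction
    for _ in range(max_steps):
        instruction = memory[pc]            # fetch
        if not isinstance(instruction, tuple):
            raise ValueError(f"cell {pc} holds data, not an instruction")
        op, *args = instruction             # decode
        pc += 1                             # default: continue with the next cell
        if op == "COPY":                    # 1) move data
            src, dst = args
            memory[dst] = memory[src]
        elif op == "ADD":                   # 2) arithmetic
            a, b, dst = args
            memory[dst] = memory[a] + memory[b]
        elif op == "JUMP_IF_ZERO":          # 3) test a condition
            cell, target = args
            if memory[cell] == 0:
                pc = target
        elif op == "JUMP":                  # 4) alter the sequence
            (target,) = args
            pc = target
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction {op!r}")
    raise RuntimeError("step limit reached without HALT")


def example_program():
    """Program and data share one memory: compute 3 * 4 by repeated addition."""
    memory = [0] * 14
    memory[0] = ("JUMP_IF_ZERO", 11, 4)     # counter exhausted? then stop
    memory[1] = ("ADD", 12, 10, 12)         # total = total + value
    memory[2] = ("ADD", 11, 13, 11)         # counter = counter + (-1)
    memory[3] = ("JUMP", 0)                 # repeat the loop
    memory[4] = ("HALT",)
    memory[10] = 3                          # value
    memory[11] = 4                          # counter
    memory[12] = 0                          # total
    memory[13] = -1                         # constant used to count down
    return memory


print(run(example_program())[12])           # 12
```

Instructions and data sit in the same list of numbered cells, which is the stored-program idea; only convention keeps cells 0 to 4 for the program and cells 10 to 13 for the data.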

Instructions, like data, are represented within the computer as binary code, a base-two system of counting. For example, the code for one kind of "copy" operation in the Intel x86 line of microprocessors is 10110000 [1]. The particular instruction set that a specific computer supports is known as that computer's machine language. Using an already-popular machine language makes it much easier to run existing software on a new machine; consequently, in markets where commercial software availability is important, suppliers have converged on one or a very small number of distinct machine languages.

More powerful computers such as minicomputers, mainframe computers and servers may differ from the model above by dividing their work between more than one main processor. Multiprocessing refers to computation with multiple processors; multicore processors are primarily ways to package more than one processor onto the same integrated circuit, although some multicore architectures do share on-chip resources among multiple processors.[2][3]
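To make the binary representation concrete, the short Python sketch below converts the bit pattern quoted above to the decimal and hexadecimal forms in which opcodes are usually listed. The helper names `bits_to_int` and `int_to_bits` are hypothetical. In x86 opcode tables the resulting byte, B0, appears as the instruction that copies an immediate byte into the AL register.

```python
def bits_to_int(bits: str) -> int:
    """Interpret a string of 0s and 1s as a base-two number."""
    return int(bits, 2)


def int_to_bits(value: int, width: int = 8) -> str:
    """Write a non-negative number as a fixed-width string of binary digits."""
    return format(value, f"0{width}b")


opcode = bits_to_int("10110000")
print(opcode)                  # 176
print(hex(opcode))             # 0xb0
print(int_to_bits(opcode))     # 10110000
```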

Supercomputers often have highly unusual architectures significantly different from the basic stored-program architecture, sometimes featuring thousands of CPUs, but such designs tend to be useful only for specialized tasks. At the other end of the size scale, some microcontrollers use the Harvard architecture that ensures that program and data memory are logically separate.

Digital circuits

The conceptual design above could be implemented using a variety of different technologies. A stored-program computer could be built entirely of mechanical components, like Babbage's devices or the Digi-Comp I. However, digital circuits allow Boolean logic and arithmetic on binary numbers to be implemented with relays, which are electrically operated switches. Shannon's famous thesis showed how relays could be arranged to form units called logic gates, implementing simple Boolean operations. Others soon figured out that vacuum tubes, which are fully electronic devices, could be used instead. Vacuum tubes were originally used as signal amplifiers for radio and other applications, but in digital electronics they served as very fast switches: when a voltage is applied to one of the electrodes, current can flow between the other two.

Through arrangements of logic gates, one can build digital circuits to do more complex tasks. One example is an adder, which implements in electronics the same method (in computer terminology, an algorithm) that children are taught for adding two numbers: add one column at a time, and carry what is left over. Eventually, by combining circuits, a complete ALU and control system can be built up. This does require a considerable number of components. CSIRAC, one of the earliest stored-program computers, is probably close to the smallest practically useful design. It had about 2,000 valves, some of which were "dual components"[4], so this represented somewhere between 2,000 and 4,000 logic components.

Vacuum tubes had severe limitations for the construction of large numbers of gates. They were expensive, unreliable (particularly when used in such large quantities), took up a lot of space, and used a lot of electrical power. While incredibly fast compared to a mechanical switch, they also had limits to the speed at which they could operate. Therefore, by the 1960s they were replaced by the transistor, a new device that performed the same task as the tube but was much smaller, faster, more reliable, far cheaper, and used much less power.
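The column-by-column adder can be sketched in Python by treating each logic gate as a small function on single bits. This is a minimal illustration of a ripple-carry adder, not a description of CSIRAC's actual circuitry; the function names and the choice to list bits least significant first are assumptions made for the example.

```python
def AND(a, b):
    return a & b


def OR(a, b):
    return a | b


def XOR(a, b):
    return a ^ b


def full_adder(a, b, carry_in):
    """Add one column: two input bits plus the carry from the previous column."""
    partial = XOR(a, b)
    total = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return total, carry_out


def ripple_carry_add(x_bits, y_bits):
    """Add two equal-length bit lists (least significant bit first)."""
    if len(x_bits) != len(y_bits):
        raise ValueError("both inputs must have the same number of bits")
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        total, carry = full_adder(a, b, carry)   # carry "ripples" to the next column
        result.append(total)
    result.append(carry)                         # final carry becomes the top bit
    return result


def to_bits(n, width):
    """Helper: non-negative integer to a list of bits, least significant first."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits):
    """Helper: list of bits (least significant first) back to an integer."""
    return sum(bit << i for i, bit in enumerate(bits))


print(from_bits(ripple_carry_add(to_bits(5, 4), to_bits(6, 4))))   # 11
```

Each full adder here uses five gates, so a 4-bit adder needs twenty, which gives some feel for why a complete ALU and control system requires thousands of components.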

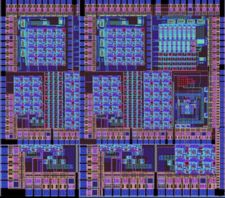

In the 1960s and 1970s, the discrete transistor was gradually replaced by the integrated circuit, which placed multiple transistors (and other components) and the wires connecting them on a single, solid piece of silicon. By the 1970s, the entire ALU and control unit, the combination becoming known as a CPU, were being placed on a single "chip" called a microprocessor. Over the history of the integrated circuit, the number of components that can be placed on one has grown enormously. The first ICs contained a few tens of components; as of 2006, the Intel Core Duo processor contains 151 million transistors.[5]

Tubes, transistors, and transistors on integrated circuits can be used as the "storage" component of the stored-program architecture, using a circuit design known as a flip-flop, and indeed flip-flops are used for small amounts of very high-speed storage. However, few computer designs have used flip-flops for the bulk of their storage needs. Instead, the earliest computers stored data in Williams tubes (essentially, projecting some dots on a TV screen and reading them again) or in mercury delay lines, where the data was stored as sound pulses travelling slowly (compared to the machine itself) along long tubes filled with mercury. These somewhat ungainly but effective methods were eventually replaced by magnetic memory devices, such as magnetic core memory, where electrical currents were used to introduce a permanent (but weak) magnetic field in some ferrous material, which could then be read to retrieve the data. Eventually, DRAM was introduced. A DRAM unit is a type of integrated circuit containing huge banks of an electronic component called a capacitor, which can store an electrical charge for a period of time. The level of charge in a capacitor can be set to store information, and then measured to read the information when required.

I/O devices

I/O (short for input/output) is a general term for devices that send computers information from the outside world and that return the results of computations. These results can either be viewed directly by a user, or they can be sent to another machine, whose control has been assigned to the computer: In a robot, for instance, the controlling computer's major output device is the robot itself.

The first generation of computers was equipped with a fairly limited range of I/O devices. A punch card reader, or something similar, was used to enter instructions and data into the computer's memory, and some kind of printer, usually a modified teletype, was used to record the results. Over the years, other devices have been added. For the personal computer, for instance, keyboards and mice are the primary ways people directly enter information into the computer, and monitors are the primary way in which information from the computer is presented back to the user, though printers, speakers, and headphones are common, too. There is a huge variety of other devices for obtaining other types of input. One example is the digital camera, which can be used to input visual information. Two further classes of I/O devices are prominent. The first is secondary storage devices, such as hard disks, CD-ROMs, key drives and the like, which are comparatively slow but high-capacity devices where information can be stored for later retrieval; the second is devices used to access computer networks. The ability to transfer data between computers has opened up a huge range of capabilities for the computer. The global Internet allows millions of computers to exchange information of all types.

Programs

Computer programs are simply lists of instructions for the computer to execute. These can range from just a few instructions that perform a simple task to a much more complex instruction list that may also include tables of data. Many computer programs contain millions of instructions, and many of those instructions are executed repeatedly. A typical modern PC (in the year 2005) can execute around 3 billion instructions per second. Computers do not gain their extraordinary capabilities through the ability to execute complex instructions. Rather, they execute millions of simple instructions arranged by people known as programmers.

In practice, people do not normally write the instructions for computers directly in machine language. Such programming is time-consuming and error-prone, making programmers less productive. Instead, programmers describe the desired actions in a "high level" programming language, which is then translated into machine language automatically by special computer programs (interpreters and compilers). Some programming languages, such as assembly language, map very closely to the machine language (low level languages); at the other end, languages like Prolog are based on abstract principles far removed from the details of the machine's actual operation (high level languages). The language chosen for a particular task depends on the nature of the task, the skill set of the programmers, tool availability and, often, the requirements of the customers (for instance, projects for the US military were often required to be in the Ada programming language).

Computer software is an alternative term for computer programs; it is a more inclusive phrase and includes all the ancillary material accompanying the program needed to do useful tasks. For instance, a video game includes not only the program itself, but also data representing the pictures, sounds, and other material needed to create the virtual environment of the game. A computer application is a piece of computer software provided to many computer users, often in a retail environment. The stereotypical modern example of an application is perhaps the office suite, a set of interrelated programs for performing common office tasks.

Going from the extremely simple capabilities of a single machine language instruction to the myriad capabilities of application programs means that many computer programs are extremely large and complex. A typical example is Windows XP, created from roughly 40 million lines of computer code in the C++ programming language;[6] there are many projects of even bigger scope, built by large teams of programmers. The management of this enormous complexity is key to making such projects possible; programming languages, and programming practices, enable the task to be divided into smaller and smaller subtasks until they come within the capabilities of a single programmer in a reasonable period.

Nevertheless, the process of developing software remains slow, unpredictable, and error-prone; the discipline of software engineering has attempted, with some success, to make the process quicker and more productive and improve the quality of the end product.

A problem or a model is computational if it is formalized in such a way that it can be transformed into a computer program. Computationality is a serious research problem in the humanistic, social and psychological sciences; for example, modern systemics and the cognitive and socio-cognitive [7] approaches have made various attempts at a computational specification of their "soft" knowledge.

Libraries and operating systems

Soon after the development of the computer, it was discovered that certain tasks were required in many different programs; an early example was computing some of the standard mathematical functions. For the purposes of efficiency, standard versions of these were collected in libraries and made available to all who required them. A particularly common task set related to handling the gritty details of "talking" to the various I/O devices, so libraries for these were quickly developed.

By the 1960s, with computers in wide industrial use for many purposes, it became common for them to be used for many different jobs within an organization. Soon, special software to automate the scheduling and execution of these many jobs became available. The combination of managing "hardware" and scheduling jobs became known as the "operating system"; the classic example of this type of early operating system was OS/360 by IBM.[8]

The next major development in operating systems was timesharing — the idea that multiple users could use the machine "simultaneously" by keeping all of their programs in memory, executing each user's program for a short time so as to provide the illusion that each user had their own computer. Such a development required the operating system to provide each user's programs with a "virtual machine" such that one user's program could not interfere with another's (by accident or design). The range of devices that operating systems had to manage also expanded; a notable one was hard disks; the idea of individual "files" and a hierarchical structure of "directories" (now often called folders) greatly simplified the use of these devices for permanent storage. Security access controls, allowing computer users access only to files, directories and programs they had permissions to use, were also common.
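The core of timesharing, giving each user's program a short turn before moving to the next, can be sketched as a round-robin scheduler in Python. This is a simplified illustration under stated assumptions: the function name `round_robin`, the job names, and the idea of measuring work in abstract "units" are all hypothetical, and real operating systems also handle priorities, I/O waits, and memory protection.

```python
from collections import deque


def round_robin(jobs, time_slice):
    """Run each job for at most time_slice units in turn until all finish.

    jobs maps a job name to the units of work it still needs.
    Returns the order of execution as (name, units_run) pairs.
    """
    if time_slice <= 0:
        raise ValueError("time_slice must be positive")
    queue = deque((name, units) for name, units in jobs.items() if units > 0)
    schedule = []
    while queue:
        name, remaining = queue.popleft()       # next job in line
        run_for = min(time_slice, remaining)
        schedule.append((name, run_for))
        remaining -= run_for
        if remaining > 0:                       # unfinished: back of the queue
            queue.append((name, remaining))
    return schedule


print(round_robin({"alice": 3, "bob": 1}, time_slice=2))
# [('alice', 2), ('bob', 1), ('alice', 1)]
```

With a short enough time slice, every user sees their program making steady progress, which is what creates the illusion of having the machine to oneself.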

Another major addition to the operating system was tools to provide programs with a standardized graphical user interface. While there are few technical reasons why a GUI has to be tied to the rest of an operating system, it allows the operating system vendor to encourage all the software for their operating system to have a similar looking and acting interface.

With the rise of the Internet, most operating systems have a TCP/IP networking stack. Just as hard disks induced operating systems to come up with the storage abstractions of files and directories, network hardware induced abstractions such as sockets and URLs. The regular connection of computers to the Internet raised the importance of security in operating systems, and as a result operating systems have had to adopt firewall, encryption, update and runtime protection functions.

Outside these "core" functions, operating systems are usually shipped with an array of other tools, some of which may have little connection with these original core functions but have been found useful by enough customers for a provider to include them. For instance, Apple's Mac OS X ships with a digital video editor application.

Operating systems for smaller computers may not provide all of these functions. The operating systems for early microcomputers with limited memory and processing capability did not, and embedded computers typically have specialized operating systems or no operating system at all, with their custom application programs performing the tasks that might otherwise be delegated to an operating system.

Alternative computing models

Despite the massive gains in speed and capacity over the history of the digital computer, there are many tasks for which current computers are inadequate. For some of these tasks, conventional computers are fundamentally inadequate, because the time taken to find a solution grows very quickly as the size of the problem to be solved expands. Therefore, there has been research interest in some computer models that use biological processes, or the oddities of quantum physics, to tackle these types of problems. For instance, DNA computing is proposed to use biological processes to solve certain problems. Because of the exponential division of cells, a DNA computing system could potentially tackle a problem in a massively parallel fashion. However, such a system is limited by the maximum practical mass of DNA that can be handled.

Quantum computers, as the name implies, take advantage of the unusual world of quantum physics. If a practical quantum computer is ever constructed, there are a limited number of problems for which the quantum computer is fundamentally faster than a standard computer. However, these problems, relating to cryptography and, unsurprisingly, quantum physics simulations, are of considerable practical interest.

These alternative models for computation remain research projects at the present time, and will likely find application only for those problems where conventional computers are inadequate.

Other types of computers

- Analog computer

- Chemical computer

- DNA computer

- Human computer

- Molecular nanotechnology

- Optical computer

- Quantum computer

- Wetware computer

See also Unconventional computing.

- ↑ IA-32 architecture one byte opcodes. sandpile.org. http://www.sandpile.org/ia32/opc_1.htm. Retrieved on 2006-04-09.

- ↑ Kanellos, Michael (2005). Intel: 15 dual-core projects under way. CNET Networks, Inc. Retrieved on 2006-07-15.

- ↑ Chen, Anne (2006). Laptops Leap Forward in Power and Battery Life. Ziff Davis Publishing Holdings Inc. Retrieved on 2006-07-15.

- ↑ McCann, Doug and Thorne, Peter. The last of the first: CSIRAC: Australia's first computer. ISBN 0-7340-2024-4.

- ↑ Thon, Harald and Topel, Bert (January 16, 2006). Will Core Duo Notebooks Trade Battery Life For Quicker Response?. Tom's Hardware. Retrieved on 2006-04-09.

- ↑ Tanenbaum, Andrew S. Modern Operating Systems (2nd ed.). Prentice Hall. ISBN 0-13-092641-8.

- ↑ Gadomski, Adam Maria (1993). TOGA Meta-theory. ENEA. Retrieved on 2006-07-24.

- ↑ System/360 Announcement. IBM Data Processing Division (April 7, 1964). http://www-03.ibm.com/ibm/history/exhibits/mainframe/mainframe_PR360.html