Evidence-based medicine

Evidence-based medicine is "the conscientious, explicit and judicious use of current best evidence in making decisions about the care of individual patients."[1] Alternative definitions are "the process of systematically finding, appraising, and using contemporaneous research findings as the basis for clinical decisions"[2] and "evidence-based medicine (EBM) requires the integration of the best research evidence with our clinical expertise and our patient's unique values and circumstances."[3] Better known as EBM, evidence-based medicine emerged in the early 1990s to help healthcare providers and policy makers evaluate the efficacy of different treatments.

Evidence-based practice is not restricted to medicine; dentistry, nursing and other allied health sciences are adopting "evidence-based medicine", as are alternative medical approaches such as acupuncture.[4][5] Evidence-based health care, or evidence-based practice, extends the concept of EBM to all health professions, including management[6][7] and policy.[8][9][10]

Classification

Two types of evidence-based medicine have been proposed.[11] Evidence-based guidelines are EBM at the organizational or institutional level, and involve producing guidelines, policy, and regulations. Evidence-based individual decision making is EBM as practiced by an individual health care provider when treating an individual patient. There is concern that evidence-based medicine focuses excessively on the physician-patient dyad and as a result misses many opportunities to improve healthcare.[11]

Why do we need EBM?

It is easy to assume that physicians always use scientific evidence conscientiously and judiciously in treating patients. In fact, most of the specific practices of physicians and surgeons are based on traditional techniques learned from their mentors in the care of patients during training. Additional modifications come from personal clinical experience, from information in the medical literature and continuing education courses. Although these practices almost always have a rational basis in biology, the actual efficacy of treatments is rarely tested by experimental trials in people. Further, even when the results of experimental trials or other evidence have been reported, there is a lag time between the acceptance of changes to medical practice and establishing them as routine in clinical care. EBM seeks to address these issues by promoting practices that have been shown to have validity using the scientific method.

Steps in EBM

The 5S search strategy[12]

- Studies: original research studies that can be found using search engines like PubMed.

- Syntheses: systematic reviews, such as Cochrane Reviews.

- Synopses: brief descriptions of original articles and reviews as they appear, published in EBM journals such as ACP Journal Club.

- Summaries: entries in EBM textbooks that integrate the best available evidence from syntheses to give evidence-based management options for a health problem.

- Systems: for example, computerized decision support systems that link individual patient characteristics to relevant evidence.

Ask

"Ask" - Formulate a well-structured clinical question, i.e. one that is directly relevant to the identified problem, and which is constructed in a way that facilitates searching for an answer. The question should have four elements: the patient or problem; the medical intervention or exposure (e.g., a cause for a disease); the comparison intervention or exposure; and the clinical outcomes. The better focused the question is, the more relevant and specific the search for evidence will be.
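The four elements of a well-structured question (often abbreviated PICO: patient or problem, intervention, comparison, outcome) can be held in a small record so that each element doubles as a search term. The following is a minimal sketch only; the class name, field names and the example question are illustrative assumptions, not part of any standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClinicalQuestion:
    """Hypothetical container for the four elements of a clinical question."""
    patient_or_problem: str
    intervention_or_exposure: str
    comparison: str
    outcomes: str

    def as_sentence(self) -> str:
        # Assemble the four elements into a single answerable question.
        return (
            f"In {self.patient_or_problem}, does {self.intervention_or_exposure}, "
            f"compared with {self.comparison}, affect {self.outcomes}?"
        )

    def search_terms(self) -> list[str]:
        # Each element can be used as a separate term when searching.
        return [
            self.patient_or_problem,
            self.intervention_or_exposure,
            self.comparison,
            self.outcomes,
        ]


# Illustrative question only; not taken from a specific study.
question = ClinicalQuestion(
    patient_or_problem="adults with knee osteoarthritis",
    intervention_or_exposure="acupuncture",
    comparison="sham acupuncture",
    outcomes="pain at 3 months",
)
print(question.as_sentence())
```

Keeping the elements separate makes it obvious when one of them, most often the comparison, has been left out of the question.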

Acquisition of evidence

The ability to "acquire" evidence in a timely manner may improve healthcare.[13] Unfortunately, doctors may be led astray when acquiring information if they do not systematically review all available evidence, because individual trials may be flawed or their outcomes may not be fully representative.[14] One proposed structure for a comprehensive evidence search is the 5S search strategy,[12] which starts with a search of "summaries" (textbooks).[15]

Appraisal of evidence

The U.S. Preventive Services Task Force (USPSTF)[16] grades its recommendations for treatments according to the strength of the evidence and the expected overall benefit (benefits minus harms). Its grades are:

- A: Good evidence that the treatment improves health outcomes, and benefits substantially outweigh harms.

- B: Fair evidence that the treatment improves health outcomes and that benefits outweigh harms.

- C: Fair evidence that the treatment can improve health outcomes but the balance of benefits and harms is too close to justify a general recommendation.

- D: Recommendation against routinely providing the treatment to asymptomatic patients. There is fair evidence that [the service] is ineffective or that harms outweigh benefits.

- I: The evidence is insufficient to recommend for or against routinely providing [the service]. Evidence that the treatment is effective is lacking, of poor quality, or conflicting and the balance of benefits and harms cannot be determined.

The USPSTF also grades the quality of the overall evidence as good, fair or poor:

- Good: Evidence includes consistent results from well-designed, well-conducted studies in representative populations that directly assess effects on health.

- Fair: Evidence is sufficient to determine effects on health outcomes, but its strength is limited by the number, quality, or consistency of the individual studies, generalizability to routine practice, or indirect nature of the evidence on health outcomes.

- Poor: Evidence is insufficient to assess the effects on health outcomes because of limited number or power of studies, flaws in their design or conduct, gaps in the chain of evidence, or lack of information on important health outcomes.

To "appraise" the quality of the evidence is very important, as one third of the results of even the most visible medical research are eventually either attenuated or refuted.[17] There are many reasons for this,[18] two of the most common being publication bias[19] and conflict of interest[20] (see article on Medical ethics). These two problems interact, as conflict of interest often leads to publication bias.[21][19]

Complicating the appraisal process further, many (if not all) studies contain some flaws in their design, and even when there are no clear methodological flaws, any outcome that is evaluated by a statistical test has a margin of error: this means that some positive outcomes will be "false positives".

Publication bias

Whether a treatment or medical intervention is effective or not may be judged either by the experience of the practising physician, or by what has been published by others. The publications with greatest authority are generally those in the peer-reviewed scientific journals, and particularly those journals with the highest standards of editorial scrutiny. However, it is not simple to get a study published in any peer-reviewed journal, least of all in the best journals. Accordingly, many studies go unreported. It is often thought to be difficult to publish small studies, the outcome of which conflicts with the reported outcomes of larger previously published studies, or to publish studies from which no clear conclusion can be drawn. In part, this reflects the wish of the best journals to publish influential papers, and in part it reflects authors choosing not to publish studies that are thought to be uninteresting. Such publication bias can be difficult to recognise, but its effects tend to encourage publication of studies that support an already formed conclusion, while discouraging publication of contradictory or equivocal findings.[19][22] Publication bias may be more common in industry sponsored research.[23]

In performing a meta-analysis, a file drawer analysis[24] or a funnel plot analysis[25][26] may help detect underlying publication bias among the studies in the meta-analysis.

Conflict of interest

The presence of a conflict of interest has many effects in medical publishing. See Medical ethics: conflict of interests for more information.

Statistical analysis

Statistical analysis of the outcomes of a clinical trial is a complex and highly technical process that often requires a professional medical statistician to advise on the design of the trial as well as on the analysis of its outcome. Flaws in the design of a trial can lead to weaknesses in statistical analysis. Ideally, a trial should be carefully designed with statistical issues in mind and with the hypothesis under test clearly formulated, and the agreed protocol should then be strictly adhered to. Often, however, problems arise during the trial; for example, there may be unanticipated outcomes, or problems in patient recruitment or in compliance with the trial protocol, and these problems can weaken the power and authority of the trial. Common problems include small sample sizes in some of the groups,[27] problems of "multiple comparisons" when several outcomes are being assessed, and biasing of study populations by selection criteria.
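The "multiple comparisons" problem can be made concrete with a little arithmetic. The sketch below assumes that the outcomes are tested independently, each at the same significance threshold, which is a simplification (outcomes in a real trial are usually correlated). The function names are illustrative. It shows how the chance of at least one false positive grows with the number of outcomes tested, together with the Bonferroni correction, which is one common (and conservative) remedy.

```python
def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Chance of at least one false positive among n independent tests
    when no treatment has any true effect."""
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test threshold that keeps the overall error rate at or below alpha."""
    return alpha / n_tests


for n_outcomes in (1, 5, 20):
    rate = familywise_error_rate(0.05, n_outcomes)
    threshold = bonferroni_threshold(0.05, n_outcomes)
    print(f"{n_outcomes:2d} outcomes: P(any false positive) = {rate:.2f}, "
          f"Bonferroni threshold = {threshold:.4f}")
```

Under these assumptions, a trial that assesses 20 outcomes at the conventional 0.05 threshold has roughly a 64% chance of producing at least one "significant" result by chance alone.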

The outcome of a study is often summarised by a "P-value". The P-value is the probability that a difference at least as large as the one observed between treatment groups would arise from the chance outcome of random sampling if there were no true difference in treatment effectiveness; it is not the probability that the observed difference reflects a true difference. Some have argued that focussing on P-values neglects other important sources of knowledge and information that should properly be used to assess the likely efficacy of a treatment.[28] In particular, some argue that the P-value should be interpreted in light of how plausible the hypothesis is, based on the totality of prior research and physiologic knowledge.[29][28][30]
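The argument that a significant result should be read in light of prior plausibility can be illustrated by treating a trial like a diagnostic test and applying Bayes' theorem. The sketch below is a simplified illustration, not a method taken from the cited papers: it assumes a single pre-specified hypothesis, a known prior probability that the hypothesis is true, and a trial with stated power and significance threshold. All names and numbers are illustrative.

```python
def probability_true_given_significant(prior: float, power: float,
                                       alpha: float = 0.05) -> float:
    """Probability that a hypothesis is true given a statistically
    significant result, by Bayes' theorem.

    prior: assumed probability the hypothesis is true before the trial
    power: chance of a significant result if the hypothesis is true
    alpha: chance of a significant result if the hypothesis is false
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


for prior in (0.5, 0.1, 0.01):
    posterior = probability_true_given_significant(prior, power=0.8)
    print(f"prior {prior:.2f} -> probability true after P < 0.05: {posterior:.2f}")
```

With the same significance threshold and power, a plausible hypothesis (prior 0.5) is very likely to be true after a significant result, whereas an implausible one (prior 0.01) remains more likely false than true.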

Application

It is important to "apply" the best practices found to the correct situation. One of the common problems in applying evidence is difficulty with numeracy: both patients and healthcare professionals have difficulties with health numeracy and probabilistic reasoning.[31] A second problem is to recognise the patient population that will benefit from the new practices. Extrapolating study results to the wrong patient populations (over-generalization)[32][33][34] and not applying study results to the correct population (under-utilization)[35][36] can both increase adverse outcomes.

The problem of over-generalization of study results may be more common among specialist physicians.[37] Two studies found that specialists were more likely to adopt cyclooxygenase 2 inhibitor drugs before the drug rofecoxib was withdrawn by its manufacturer after it emerged that its use had unanticipated adverse effects.[38][39] One of the studies went on to state:

- "using COX-2s as a model for physician adoption of new therapeutic agents, specialists were more likely to use these new medications for patients likely to benefit but were also significantly more likely to use them for patients without a clear indication".[39]

Similarly, orthopedists provide more expensive care for back pain, but without measurably increased benefit compared to other types of practitioners.[40] Part of the reason that subspecialists may be more likely to adopt inadequately studied innovations may be that they read from a spectrum of journals that have less consistent quality.[41]

The problem of under-utilizing study results may be more common when physicians are practicing outside of their expertise. For example, specialist physicians are less likely to under-utilize specialty care[42][43], while primary care physicians are less likely to under-utilize preventive care[44][45].

Metrics used in EBM

Diagnosis

- Sensitivity and specificity

- Likelihood ratios (and the related diagnostic odds ratio)
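The diagnostic measures listed above can all be derived from a two-by-two table of test results against true disease status. The following is a minimal sketch; the function names and the counts are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
def diagnostic_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute diagnostic measures from a two-by-two table.

    tp, fn: diseased patients with positive and negative tests
    fp, tn: non-diseased patients with positive and negative tests
    Assumes specificity is neither 0 nor 1, so the ratios are defined.
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "lr_positive": sensitivity / (1 - specificity),
        "lr_negative": (1 - sensitivity) / specificity,
    }


def post_test_probability(pre_test_probability: float,
                          likelihood_ratio: float) -> float:
    """Convert a pre-test probability to a post-test probability via odds."""
    pre_odds = pre_test_probability / (1 - pre_test_probability)
    post_odds = pre_odds * likelihood_ratio
    return post_odds / (1 + post_odds)


# Hypothetical study: 100 diseased and 200 non-diseased patients.
metrics = diagnostic_metrics(tp=90, fp=30, fn=10, tn=170)
print(metrics)
print(post_test_probability(0.20, metrics["lr_positive"]))
```

In this hypothetical example, a positive test with a likelihood ratio of about 6 raises a 20% pre-test probability of disease to about 60%.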

Interventions

Relative measures

- Relative risk ratio

- Relative risk reduction

Absolute measures

- Absolute risk reduction

- Number needed to treat

- Number needed to screen

- Number needed to harm
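The relative and absolute measures listed above are all computed from the event rates in the treated and control groups of a trial. The sketch below uses hypothetical counts and illustrative names; exact fractions are used so that the number needed to treat is not distorted by floating-point rounding. The number needed to harm and the number needed to screen follow the same pattern, as the reciprocal of an absolute risk increase or of the absolute risk reduction achieved by screening.

```python
from fractions import Fraction
from math import ceil


def intervention_measures(events_treated: int, n_treated: int,
                          events_control: int, n_control: int) -> dict:
    """Relative and absolute effect measures from trial event counts."""
    treated_rate = Fraction(events_treated, n_treated)
    control_rate = Fraction(events_control, n_control)
    relative_risk = treated_rate / control_rate
    absolute_risk_reduction = control_rate - treated_rate
    return {
        "relative_risk": relative_risk,
        "relative_risk_reduction": 1 - relative_risk,
        "absolute_risk_reduction": absolute_risk_reduction,
        # Rounded up by convention; undefined when treatment shows no benefit.
        "number_needed_to_treat": (
            ceil(1 / absolute_risk_reduction)
            if absolute_risk_reduction > 0 else None
        ),
    }


# Hypothetical trial: 15 of 100 treated and 20 of 100 control patients
# experience the adverse outcome.
result = intervention_measures(15, 100, 20, 100)
for name, value in result.items():
    print(name, value if value is None else float(value))
```

The example shows why both kinds of measure are reported: a relative risk reduction of 25% corresponds here to an absolute risk reduction of only 5 percentage points, or 20 patients treated to prevent one event.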

Health policy

- Cost per year of life saved[46]

- Years (or months or days) of life saved. "A gain in life expectancy of a month from a preventive intervention targeted at populations at average risk and a gain of a year from a preventive intervention targeted at populations at elevated risk can both be considered large."[47]

Experimental trials: producing the evidence

- "In the treatment of the sick person, the physician must be free to use a new diagnostic and therapeutic measure, if in his or her judgement it offers hope of saving life, re-establishing health or alleviating suffering. The potential benefits, hazards and discomfort of a new method should be weighed against the advantages of the best current diagnostic and therapeutic methods. In any medical study, every patient - including those of a control group, if any - should be assured of the best proven diagnostic and therapeutic method. The physician can combine medical research with professional care, the objective being the acquisition of new medical knowledge, only to the extent that medical research is justified by its potential diagnostic or therapeutic value for the patient." (From The Declaration of Helsinki[48])

"A clinical trial is defined as a prospective scientific experiment that involves human subjects in whom treatment is initiated for the evaluation of a therapeutic intervention. In a randomized controlled clinical trial, each patient is assigned to receive a specific treatment intervention by a chance mechanism."[49] The theory behind these trials is that the value of a treatment will be shown in an objective way, and, though usually unstated, there is an assumption that the results of the trial will be applicable to the care of patients who have the condition that was treated.

The best evidence is thought to come from large multicentre clinical trials that are randomised and placebo-controlled, and which are conducted double-blind according to a predetermined schedule that is strictly adhered to. Trials should be large, so that serious adverse events might be detected even when they occur rarely. Multi-centre trials minimise problems that can arise when a single geographical locus has a population that is not fully representative of the global population, and they can minimise the effect of geographical variations in environment and health care delivery. Randomisation (if the study population is large enough) should mean that the study groups are unbiased. A double-blind trial is one in which neither the patient nor the deliverer of the treatment is aware of the nature of the treatment offered to any particular individual, and this avoids bias caused by the expectations of either the doctor or the patient. Placebo controls are important, because the placebo effect can often be very strong.

However, such trials are very expensive, difficult to co-ordinate properly, and often impractical to design optimally. For example, for many types of medical intervention, no satisfactory placebo treatment is possible. For several medical interventions, the use of a placebo, although feasible, is considered unethical (see section on Unethical use of placebos).

In practice, randomized controlled trials are available to support only 21%[50] to 53%[51] of principal therapeutic decisions.[52] Because of this, evidence-based medicine has evolved to accept lesser levels of evidence when randomized controlled trials are not available.[53]

Sackett, one of the founders of EBM, recognized that large-scale trials were not conducted for many conditions (see section below), and that it might not be possible to conduct them. Underlining the difficulty in extrapolating from large trials, Sackett proposed the use of N of 1 randomized controlled trials (also called single-subject randomized trials). In these, the patient is both the treatment group and the placebo group, but at different times. Blinding must be done with the collaboration of the pharmacist, and treatment effects must appear and disappear quickly following introduction and cessation of the therapy. This type of trial can be performed for many chronic, stable conditions.[54] The individualized nature of the single-subject randomized trial, and the fact that it often requires the active participation of the patient (questionnaires, diaries), appeals to the patient and promotes better insight and self-management[55][56] as well as patient safety,[57] in a cost-effective manner.

Summarizing the evidence

Systematic review

A systematic review is a summary of healthcare research that involves a thorough literature search and critical appraisal of individual studies to identify the valid and applicable evidence. It often, but not always, uses appropriate techniques (meta-analysis) to combine these valid studies, and may grade the quality of the particular pieces of evidence according to the methodology used, and according to strengths or weaknesses of the study design.

While many systematic reviews are based on an explicit quantitative meta-analysis of available data, there are also qualitative reviews which nonetheless adhere to the standards for gathering, analyzing and reporting evidence.

Clinical practice guidelines

Clinical practice guidelines are defined as "Directions or principles presenting current or future rules of policy for assisting health care practitioners in patient care decisions regarding diagnosis, therapy, or related clinical circumstances. The guidelines may be developed by government agencies at any level, institutions, professional societies, governing boards, or by the convening of expert panels. The guidelines form a basis for the evaluation of all aspects of health care and delivery."[58]

Incorporating evidence into clinical care

Medical informatics

Practicing clinicians cite the lack of time for keeping up with emerging medical evidence that may change clinical practice.[59] Medical informatics is an essential adjunct to EBM, and focuses on creating tools to access and apply the best evidence for making decisions about patient care.[3]

Before practicing EBM, informaticians (or informationists) must be familiar with medical journals, literature databases, medical textbooks, practice guidelines, and the growing number of other dedicated evidence-based resources, like the Cochrane Database of Systematic Reviews and Clinical Evidence.[59]

Similarly, for practicing medical informatics properly, it is essential to have an understanding of EBM, including the ability to phrase an answerable question, locate and retrieve the best evidence, and critically appraise and apply it.[60][61]

Teaching evidence-based medicine

Criticisms of EBM

There are a number of criticisms of EBM.[62][63] Most generally, EBM has been criticized as an attempt to define knowledge in medicine in the same way that was done unsuccessfully by the logical positivists in epistemology, "trying to establish a secure foundation for scientific knowledge based only on observed facts".[64]

A general problem with EBM is that it seeks to make recommendations for treatment that (on balance) are likely to provide the best treatment for most patients. However, what is the best treatment for most patients is not necessarily the best treatment for a particular individual patient. The causes of disease and the responses of patients to treatment all vary considerably, and are affected, for example, by the individual's genetic make-up, their particular history, and by factors of individual lifestyle. To take these properly into account requires the clinical experience of the treating physician, and over-reliance upon recommendations based upon statistical outcomes of treatments given in a standardised way to large populations may not always lead to the best care for a particular individual.

Unethical use of placebos

Ideally, the effectiveness of any medical treatment should be compared with that of a placebo in a double-blind trial, where neither the patient nor the doctor is aware of whether the treatment administered is an active treatment or an inert placebo. Placebo controls are thought to be important because of the considerable "power of suggestion". However, as stated in the Declaration of Helsinki by the World Medical Association, it is unethical to give any patient a placebo treatment if an existing treatment option is known to be beneficial.[65][66] Many scientists and ethicists consider that the U.S. Food and Drug Administration, by demanding placebo-controlled trials, encourages the systematic violation of the Declaration of Helsinki.[67] The use of placebo controls remains a convenient way to avoid direct comparisons with a competing drug.

As EBM evolves, appropriate use of placebo is being revised.[68][69] When guidelines suggest a placebo is an unethical control, then an "active-control noninferiority trial" may be used.[70] To establish non-inferiority, the following three conditions should be - but frequently are not - established:[70]

- "The treatment under consideration exhibits therapeutic noninferiority to the active control."

- "The treatment would exhibit therapeutic efficacy in a placebo-controlled trial if such a trial were to be performed."

- "The treatment offers ancillary advantages in safety, tolerability, cost, or convenience."

Ulterior motives

An early criticism of EBM is that it will be a guise for rationing resources or other goals that are not in the interest of the patient.[71][72] In 1994, the American Medical Association helped introduce the "Patient Protection Act" in Congress to reduce the power of insurers to use guidelines to deny payment for medical services.[73]

As a possible example, Milliman Care Guidelines state that they have produced "evidence-based clinical guidelines since 1990".[74] In 2000, an academic pediatrician sued Milliman for using his name as an author on practice guidelines that he stated were "dangerous".[75][76][77] A similar suit disputing the origin of care decisions at Kaiser has been filed.[78] The outcomes of both suits are not known.

Conversely, clinical practice guidelines by the Infectious Disease Society of America are being investigated by Connecticut's attorney general on grounds that the guidelines, which do not recognize a chronic form of Lyme disease, are anticompetitive.[79][80]

EBM not recognizing the limits of clinical epidemiology

EBM is a set of techniques derived from clinical epidemiology, but a common criticism of epidemiology is that it can show association, but not causation. Clinical epidemiology has a role in informing clinical decisions if it is complemented with testable hypotheses on disease,[81] but many critics consider that EBM is a form of clinical epidemiology that has become so prevalent in health care systems, and has imposed such an empiricist bias on medical research, that it has undermined the very notion of causal inference in clinical practice.[82] It is argued that it has even become condemnable to use common sense,[83] as was cleverly illustrated in a systematic review of randomized controlled trials studying the effects of parachutes against gravitational challenges (free falls).[84]

Fallibility of knowledge

EBM has been criticized on epistemologic grounds as "trying to establish a secure foundation for scientific knowledge based only on observed facts"[64] and as not recognizing the fallible nature[85] of knowledge in general. The inevitable failure of reliance on empiric evidence as a foundation for knowledge was recognized in the eighteenth century and is known as the "Problem of Induction" or "Hume's Problem".[86]

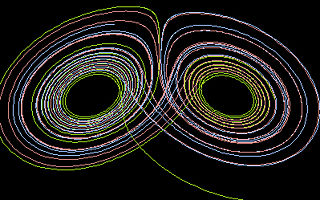

Complexity theory

Complexity theory and chaos theory are proposed as further explaining the nature of medical knowledge.[87][88] Regarding health services research, although complexity theory has not advanced to the state of being able to mathematically model healthcare delivery, it has been used as a framework for case study[89][90][91][92] and traditional bivariate analysis[93] of healthcare delivery. For example, a systematic review of organizational interventions to improve the quality of care of diabetes mellitus type 2 suggests that interventions based on complexity theory will be more successful.[93] If the goal of modeling healthcare is to comply with specific quality indicators, interventions based on systems theory may be more effective than those based on complexity theory.[94] Regarding basic science research, fractal patterns have been found in cardiac conduction and in fluctuations in vital signs over time.[95] Fractals are evidence of a system that follows the non-linear mathematics of a complex adaptive system. There are many other examples of fractal patterns in nature, including weather patterns.[96]

References

- ↑ Sackett DL et al. (1996). "Evidence based medicine: what it is and what it isn't". BMJ 312: 71–2. PMID 8555924. [e]

- ↑ Evidence-Based Medicine Working Group (1992). "Evidence-based medicine. A new approach to teaching the practice of medicine. Evidence-Based Medicine Working Group". JAMA 268: 2420–5. PMID 1404801. [e]

- ↑ 3.0 3.1 Glasziou, Paul; Strauss, Sharon Y. (2005). Evidence-based medicine: how to practice and teach EBM. Elsevier/Churchill Livingstone. ISBN 0-443-07444-5.

- ↑ Manheimer E et al. (2007). "Meta-analysis: acupuncture for osteoarthritis of the knee". Ann Intern Med 146: 868–77. PMID 17577006. [e]

- ↑ Assefi NP et al. (2005). "A randomized clinical trial of acupuncture compared with sham acupuncture in fibromyalgia". Ann Intern Med 143: 10–9. PMID 15998750. [e]

- ↑ Clancy CM, Cronin K (2005). "Evidence-based decision making: global evidence, local decisions". Health affairs (Project Hope) 24: 151–62. DOI:10.1377/hlthaff.24.1.151. PMID 15647226. Research Blogging.

- ↑ Shojania KG, Grimshaw JM (2005). "Evidence-based quality improvement: the state of the science". Health affairs (Project Hope) 24: 138–50. DOI:10.1377/hlthaff.24.1.138. PMID 15647225. Research Blogging.

- ↑ Fielding JE, Briss PA (2006). "Promoting evidence-based public health policy: can we have better evidence and more action?". Health affairs (Project Hope) 25: 969–78. DOI:10.1377/hlthaff.25.4.969. PMID 16835176. Research Blogging.

- ↑ Foote SB, Town RJ (2007). "Implementing evidence-based medicine through medicare coverage decisions". Health affairs (Project Hope) 26 (6): 1634–42. DOI:10.1377/hlthaff.26.6.1634. PMID 17978383. Research Blogging.

- ↑ Fox DM (2005). "Evidence of evidence-based health policy: the politics of systematic reviews in coverage decisions". Health affairs (Project Hope) 24: 114–22. DOI:10.1377/hlthaff.24.1.114. PMID 15647221. Research Blogging.

- ↑ 11.0 11.1 Eddy DM (2005). "Evidence-based medicine: a unified approach". Health affairs (Project Hope) 24: 9-17. DOI:10.1377/hlthaff.24.1.9. PMID 15647211. Research Blogging.

- ↑ 12.0 12.1 Haynes RB (2006). "Of studies, syntheses, synopses, summaries, and systems: the "5S" evolution of information services for evidence-based health care decisions". ACP J Club 145: A8. PMID 17080967. [e]

- ↑ Banks DE et al. (2007). "Decreased hospital length of stay associated with presentation of cases at morning report with librarian support". Journal of the Medical Library Association : JMLA 95: 381–7. DOI:10.3163/1536-5050.95.4.381. PMID 17971885. Research Blogging.

- ↑ McKibbon KA, Fridsma DB (2006). "Effectiveness of clinician-selected electronic information resources for answering primary care physicians' information needs". Journal of the American Medical Informatics Association : JAMIA 13: 653–9. DOI:10.1197/jamia.M2087. PMID 16929042. Research Blogging.

- ↑ Patel MR et al. (2006). "Randomized trial for answers to clinical questions: evaluating a pre-appraised versus a MEDLINE search protocol". JMLA 94: 382–7. PMID 17082828. [e]

- ↑ U.S. Preventive Services Task Force Ratings: [Strength of Recommendations and Quality of Evidence. Guide to Clinical Preventive Services, Third Edition: Periodic Updates, 2000-2003. Hosted by Agency for Healthcare Research and Quality, Rockville, MD.

- ↑ Ioannidis JP (2005). "Contradicted and initially stronger effects in highly cited clinical research". JAMA 294: 218–28. DOI:10.1001/jama.294.2.218. PMID 16014596. Research Blogging.

- ↑ Ioannidis JP et al. (1998). "Issues in comparisons between meta-analyses and large trials". JAMA 279: 1089–93. PMID 9546568. [e]

- ↑ 19.0 19.1 19.2 Dickersin K et al. (1992). "Factors influencing publication of research results. Follow-up of applications submitted to two institutional review boards". JAMA 267: 374–8. PMID 1727960. [e]

Cite error: Invalid

<ref>tag; name "pmid1727960" defined multiple times with different content Cite error: Invalid<ref>tag; name "pmid1727960" defined multiple times with different content - ↑ Smith R (2005). "Medical journals are an extension of the marketing arm of pharmaceutical companies". PLoS Med 2: e138. DOI:10.1371/journal.pmed.0020138. PMID 15916457. Research Blogging.

- ↑ Melander H et al. (2003). "Evidence b(i)ased medicine--selective reporting from studies sponsored by pharmaceutical industry: review of studies in new drug applications". BMJ 326: 1171–3. DOI:10.1136/bmj.326.7400.1171. PMID 12775615. Research Blogging.

- ↑ Krzyzanowska MK et al. (2003). "Factors associated with failure to publish large randomized trials presented at an oncology meeting". JAMA 290: 495–501. DOI:10.1001/jama.290.4.495. PMID 12876092. Research Blogging.

- ↑ Lexchin J et al. (2003). "Pharmaceutical industry sponsorship and research outcome and quality: systematic review". BMJ 326: 1167–70. DOI:10.1136/bmj.326.7400.1167. PMID 12775614. Research Blogging.

- ↑ Pham B et al. (2001). "Is there a "best" way to detect and minimize publication bias? An empirical evaluation". Evaluation & the Health Professions 24 (2): 109–25. PMID 11523382. [e]

- ↑ Egger M et al. (1997). "Bias in meta-analysis detected by a simple, graphical test". BMJ 315: 629–34. PMID 9310563. [e]

- ↑ Terrin N et al. (2005). "In an empirical evaluation of the funnel plot, researchers could not visually identify publication bias". J Clin Epidemiol 58: 894–901. DOI:10.1016/j.jclinepi.2005.01.006. PMID 16085192. Research Blogging.

- ↑ Glasziou P, Doll H (2007). "Was the study big enough? Two "café" rules (Editorial)". ACP J Club 147: A08. PMID 17975858. [e]

- ↑ 28.0 28.1 Goodman SN (1999). "Toward evidence-based medical statistics. 1: The P value fallacy". Ann Intern Med 130: 995–1004. PMID 10383371. [e]

- ↑ Browner WS, Newman TB (1987). "Are all significant P values created equal? The analogy between diagnostic tests and clinical research". JAMA 257: 2459–63. PMID 3573245. [e]

- ↑ Goodman SN (1999). "Toward evidence-based medical statistics. 2: The Bayes factor". Ann Intern Med 130: 1005–13. PMID 10383350. [e]

- ↑ Ancker JS, Kaufman D (2007). "Rethinking Health Numeracy: A Multidisciplinary Literature Review". DOI:10.1197/jamia.M2464. PMID 17712082.

- ↑ Gross CP et al. (2000). "Relation between prepublication release of clinical trial results and the practice of carotid endarterectomy". JAMA 284: 2886–93. PMID 11147985.

- ↑ Juurlink DN et al. (2004). "Rates of hyperkalemia after publication of the Randomized Aldactone Evaluation Study". N Engl J Med 351: 543–51. DOI:10.1056/NEJMoa040135. PMID 15295047.

- ↑ Beohar N et al. (2007). "Outcomes and complications associated with off-label and untested use of drug-eluting stents". JAMA 297: 1992–2000. DOI:10.1001/jama.297.18.1992. PMID 17488964.

- ↑ Soumerai SB et al. (1997). "Adverse outcomes of underuse of beta-blockers in elderly survivors of acute myocardial infarction". JAMA 277: 115–21. PMID 8990335.

- ↑ Hemingway H et al. (2001). "Underuse of coronary revascularization procedures in patients considered appropriate candidates for revascularization". N Engl J Med 344: 645–54. PMID 11228280.

- ↑ Turner BJ, Laine C (2001). "Differences between generalists and specialists: knowledge, realism, or primum non nocere?". J Gen Intern Med 16: 422–4. DOI:10.1046/j.1525-1497.2001.016006422.x. PMID 11422641.

- ↑ Rawson N et al. (2005). "Factors associated with celecoxib and rofecoxib utilization". Ann Pharmacother 39: 597–602. PMID 15755796.

- ↑ De Smet BD et al. (2006). "Over and under-utilization of cyclooxygenase-2 selective inhibitors by primary care physicians and specialists: the tortoise and the hare revisited". J Gen Intern Med 21: 694–7. DOI:10.1111/j.1525-1497.2006.00463.x. PMID 16808768.

- ↑ Carey T et al. (1995). "The outcomes and costs of care for acute low back pain among patients seen by primary care practitioners, chiropractors, and orthopedic surgeons. The North Carolina Back Pain Project". N Engl J Med 333: 913–7. PMID 7666878.

- ↑ McKibbon KA et al. (2007). "Which journals do primary care physicians and specialists access from an online service?". JMLA 95: 246–54. DOI:10.3163/1536-5050.95.3.246. PMID 17641754.

- ↑ Majumdar S et al. (2001). "Influence of physician specialty on adoption and relinquishment of calcium channel blockers and other treatments for myocardial infarction". J Gen Intern Med 16: 351–9. PMID 11422631.

- ↑ Fendrick A, Hirth R, Chernew M (1996). "Differences between generalist and specialist physicians regarding Helicobacter pylori and peptic ulcer disease". Am J Gastroenterol 91: 1544–8. PMID 8759658.

- ↑ Lewis C et al. (1991). "The counseling practices of internists". Ann Intern Med 114: 54–8. PMID 1983933.

- ↑ Turner B et al. "Breast cancer screening: effect of physician specialty, practice setting, year of medical school graduation, and sex". Am J Prev Med 8: 78–85. PMID 1599724.

- ↑ Tengs TO et al. (1995). "Five-hundred life-saving interventions and their cost-effectiveness". Risk Anal 15: 369–90. PMID 7604170.

- ↑ Wright JC, Weinstein MC (1998). "Gains in life expectancy from medical interventions--standardizing data on outcomes". N Engl J Med 339: 380–6. PMID 9691106.

- ↑ World Medical Organization. (1996) Declaration of Helsinki. British Medical Journal 313:1448-1449. hosted at cirp.org

- ↑ Stanley K (2007). "Design of randomized controlled trials". Circulation 115 (9): 1164–9. DOI:10.1161/CIRCULATIONAHA.105.594945. PMID 17339574.

- ↑ Michaud G et al. (1998). "Are therapeutic decisions supported by evidence from health care research?". Arch Intern Med 158: 1665–8. PMID 9701101.

- ↑ Ellis J et al. (1995). "Inpatient general medicine is evidence based. A-Team, Nuffield Department of Clinical Medicine". Lancet 346: 407–10. PMID 7623571.

- ↑ Booth A. Percentage of practice that is evidence based? Retrieved on 2007-11-15.

- ↑ Haynes RB (2006). "Of studies, syntheses, synopses, summaries, and systems: the "5S" evolution of information services for evidence-based healthcare decisions". Evidence-based Medicine 11: 162–4. DOI:10.1136/ebm.11.6.162-a. PMID 17213159.

- ↑ Guyatt G et al. (1988). "A clinician's guide for conducting randomized trials in individual patients". CMAJ: Canadian Medical Association Journal 139: 497–503. PMID 3409138.

- ↑ Brookes ST et al. (2007). ""Me's me and you's you": Exploring patients' perspectives of single patient (n-of-1) trials in the UK". Trials 8: 10. DOI:10.1186/1745-6215-8-10. PMID 17371593.

- ↑ Langer JC et al. (1993). "The single-subject randomized trial. A useful clinical tool for assessing therapeutic efficacy in pediatric practice". Clinical Pediatrics 32: 654–7. PMID 8299295.

- ↑ Mahon J et al. (1996). "Randomised study of n of 1 trials versus standard practice". BMJ 312: 1069–74. PMID 8616414.

- ↑ National Library of Medicine. Clinical practice guidelines. Retrieved on 2007-10-19.

- ↑ Mendelson D, Carino TV (2005). "Evidence-based medicine in the United States--de rigueur or dream deferred?". Health Affairs (Project Hope) 24: 133–6. DOI:10.1377/hlthaff.24.1.133. PMID 15647224.

- ↑ Hersh W (2002). "Medical informatics education: an alternative pathway for training informationists". JMLA 90: 76–9. PMID 11838463.

- ↑ Shearer BS et al. (2002). "Bringing the best of medical librarianship to the patient team". JMLA 90: 22–31. PMID 11838456.

- ↑ Straus S et al. (2007). "Misunderstandings, misperceptions, and mistakes". Evidence-based Medicine 12 (1): 2–3. DOI:10.1136/ebm.12.1.2-a. PMID 17264255.

- ↑ Straus SE, McAlister FA (2000). "Evidence-based medicine: a commentary on common criticisms". CMAJ: Canadian Medical Association Journal 163: 837–41. PMID 11033714.

- ↑ Goodman SN (2002). "The mammography dilemma: a crisis for evidence-based medicine?". Ann Intern Med 137: 363–5. PMID 12204023.

- ↑ World Medical Association. Declaration of Helsinki: Ethical Principles for Medical Research Involving Human Subjects. Retrieved on 2007-11-17.

- ↑ (1997) "World Medical Association declaration of Helsinki. Recommendations guiding physicians in biomedical research involving human subjects". JAMA 277: 925–6. PMID 9062334.

- ↑ Michels KB, Rothman KJ (2003). "Update on unethical use of placebos in randomised trials". Bioethics 17: 188–204. PMID 12812185.

- ↑ Temple R, Ellenberg SS (2000). "Placebo-controlled trials and active-control trials in the evaluation of new treatments. Part 1: ethical and scientific issues". Ann Intern Med 133: 455–63. PMID 10975964.

- ↑ Ellenberg SS, Temple R (2000). "Placebo-controlled trials and active-control trials in the evaluation of new treatments. Part 2: practical issues and specific cases". Ann Intern Med 133: 464–70. PMID 10975965.

- ↑ Kaul S, Diamond GA (2006). "Good enough: a primer on the analysis and interpretation of noninferiority trials". Ann Intern Med 145: 62–9. PMID 16818930.

- ↑ Grahame-Smith D (1995). "Evidence based medicine: Socratic dissent". BMJ 310: 1126–7. PMID 7742683.

- ↑ Formoso G et al. (2001). "Practice guidelines: useful and "participative" method? Survey of Italian physicians by professional setting". Arch Intern Med 161: 2037–42. PMID 11525707.

- ↑ Pear, R. A.M.A. and Insurers Clash Over Restrictions on Doctors - New York Times. Retrieved on 2007-11-14.

- ↑ Evidence-Based Clinical Guidelines by Milliman Care Guidelines. Retrieved on 2007-11-14.

- ↑ Nissimov, R (2000). Cost-cutting guide used by HMOs called `dangerous' / Doctor on UT-Houston Medical School staff sues publisher. Houston Chronicle. Retrieved on 2007-11-14.

- ↑ Nissimov, R (2000). Judge tells firm to explain how pediatric rules derived. Houston Chronicle. Retrieved on 2007-11-14.

- ↑ Martinez, B (2000). Insurance Health-Care Guidelines Are Assailed for Putting Patients Last. Wall Street Journal.

- ↑ Colliver, V (1/07/2002). Lawsuit disputes truth of Kaiser Permanente ads. San Francisco Chronicle. Retrieved on 2007-11-14.

- ↑ Warner, S (2/7/2007). The Scientist : State official subpoenas infectious disease group. Retrieved on 2007-11-14.

- ↑ Gesensway, D (2007). ACP Observer, January-February 2007 - Experts spar over treatment for 'chronic' Lyme disease. Retrieved on 2007-11-14.

- ↑ Djulbegovic B et al. (2000). "Evidentiary challenges to evidence-based medicine". Journal of Evaluation in Clinical Practice 6: 99–109. PMID 10970004.

- ↑ Charlton BG (1997). "Book Review: Evidence-based medicine: how to practice and teach EBM by Sackett DL, Richardson WS, Rosenberg W, Haynes RB". Journal of Evaluation in Clinical Practice 3: 169–172. http://www.hedweb.com/bgcharlton/journalism/ebm.html

- ↑ Michelson J (2004). "Critique of (im)pure reason: evidence-based medicine and common sense". Journal of Evaluation in Clinical Practice 10 (2): 157–61. DOI:10.1111/j.1365-2753.2003.00478.x. PMID 15189382.

- ↑ Smith GC, Pell JP (2003). "Parachute use to prevent death and major trauma related to gravitational challenge: systematic review of randomised controlled trials". BMJ 327: 1459–61. DOI:10.1136/bmj.327.7429.1459. PMID 14684649.

- ↑ Upshur RE (2000). "Seven characteristics of medical evidence". Journal of Evaluation in Clinical Practice 6 (2): 93–7. PMID 10970003.

- ↑ Vickers, J (2006). The Problem of Induction (Stanford Encyclopedia of Philosophy). Stanford Encyclopedia of Philosophy. Retrieved on 2007-11-16.

- ↑ Sweeney, Kieran (2006). Complexity in Primary Care: Understanding Its Value. Abingdon: Radcliffe Medical Press. ISBN 1-85775-724-6.

- ↑ Holt, Tim A (2004). Complexity for Clinicians. Abingdon: Radcliffe Medical Press. ISBN 1-85775-855-2.

- ↑ Anderson RA et al. (2005). "Case study research: the view from complexity science". Qualitative Health Res 15: 669–85. DOI:10.1177/1049732305275208. PMID 15802542.

- ↑ Miller WL et al. (2001). "Practice jazz: understanding variation in family practices using complexity science". Journal of Family Practice 50: 872–8. PMID 11674890.

- ↑ Crabtree BF et al. (2001). "Understanding practice from the ground up". Journal of Family Practice 50: 881–7. PMID 11674891.

- ↑ Sturmberg JP (2007). "Systems and complexity thinking in general practice: part 1 - clinical application". Australian Family Physician 36: 170–3. PMID 17339983.

- ↑ Leykum LK et al. (2007). "Organizational interventions employing principles of complexity science have improved outcomes for patients with Type II diabetes". Implementation Science: IS 2: 28. DOI:10.1186/1748-5908-2-28. PMID 17725834.

- ↑ Rhydderch M et al. (2004). "Organisational change theory and the use of indicators in general practice". Quality & Safety in Health Care 13: 213–7. DOI:10.1136/qhc.13.3.213. PMID 15175493.

- ↑ Goldberger AL (1996). "Non-linear dynamics for clinicians: chaos theory, fractals, and complexity at the bedside". Lancet 347 (9011): 1312–4. PMID 8622511.

- ↑ Lorenz's Model of Convection. Retrieved on 2007-11-21.
